Once you start using your Raspberry Pi cluster for workloads with persistence, you’re probably going to want to implement a decent Kubernetes backup strategy.

I have been using my Raspberry Pi Cluster for a number of workloads with persistence requirements, from WordPress sites with MySQL databases, to Minecraft servers.

This post will detail how to get a basic install of Velero up and running on an ARM-based Raspberry Pi Kubernetes cluster that uses NFS volume mounts for container persistence.

In other words, a solution that lets you back up both your Kubernetes resource state and the storage that those resources mount, read from, and write to.

First though, I’ll revisit how I have been backing up my Pi cluster up until recently.

A Simple and Naive Backup Approach

One way of dealing with backups is a fairly brute-force method: copy the data off and upload it to another location. I’ve been using this for a while now, but wanted a more Kubernetes-native method as an extra backup.

I have a cronjob that runs a mysqldump of all databases, then gzips the result and uploads it to BackBlaze B2 object storage.

Another cronjob handles compression and upload of a variety of NFS volumes that pods mount. This cronjob runs in a BSD jail on my FreeNAS storage server, where the same Kubernetes NFS storage is mounted.
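As a rough illustration of what those two cronjobs do (a minimal sketch, not the actual scripts from my setup), the dump and archive steps could look something like this. The function names, archive names, and paths are all hypothetical, and the upload step is left out.

```shell
#!/bin/sh
# Hypothetical names throughout: prefixes, paths and file names are illustrative only.

# Build a dated archive name, e.g. "mysql-all-2020-10-01.sql.gz".
archive_name() {
    # $1 = prefix, $2 = date stamp, $3 = extension
    printf '%s-%s.%s\n' "$1" "$2" "$3"
}

# Dump every database and compress the stream.
# Assumes mysqldump can authenticate on its own (e.g. via ~/.my.cnf).
dump_databases() {
    # $1 = output file
    mysqldump --all-databases --single-transaction | gzip > "$1"
}

# Compress one NFS-backed directory into a tarball.
archive_volume() {
    # $1 = source directory, $2 = output file
    tar -czf "$2" -C "$(dirname "$1")" "$(basename "$1")"
}

stamp=$(date +%Y-%m-%d)
echo "Database dump would be written to: $(archive_name mysql-all "$stamp" sql.gz)"
```

A cron entry would then call dump_databases and archive_volume with real paths before handing the resulting files to whatever upload tool you use.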

You can see the details of how I do this in my Cheap S3 Cloud Backup with BackBlaze B2 post.

Kubernetes Backup of State and Volumes with Velero

Velero is nice in that it is pluggable. You can use different plugins and adapters to talk to different types of storage services.

It works well for backing up your pod storage if you’re running Kubernetes on a platform where that storage runs too. For example, if you’re running Kubernetes on AWS and using EBS persistent volumes. Another example would be VMware with vSAN storage.

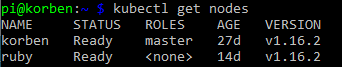

But in this post we’re dealing with Kubernetes on Raspberry Pi using NFS shared storage. If you haven’t yet set up shared storage, here is a guide to setting up NFS storage on Raspberry Pi Kubernetes.

We’ll assume you also want to back up your state and pod volume storage to AWS S3. You can quite easily modify some of the commands to use S3 API-compatible storage instead though, e.g. MinIO.

Install Velero

On a management machine (where you’re set up with kubectl and your cluster context), download Velero and make it executable. I’m using a Raspberry Pi here, so I’ve downloaded the ARM version.

curl -L -O https://github.com/vmware-tanzu/velero/releases/download/v1.5.1/velero-v1.5.1-linux-arm.tar.gz
tar -xvf velero-v1.5.1-linux-arm.tar.gz
sudo mv velero-v1.5.1-linux-arm/velero /usr/local/bin/velero
sudo chmod +x /usr/local/bin/velero
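If your management machine isn’t a 32-bit Raspberry Pi, the only part of the download that changes is the architecture suffix in the file name. Here is a small sketch that picks the suffix from `uname -m`. The helper names are mine, and the mapping only covers the cases I’m confident about.

```shell
#!/bin/sh
# Map `uname -m` output to the architecture suffix used in Velero release file names.
velero_arch() {
    case "$1" in
        armv7l)        echo arm ;;
        aarch64|arm64) echo arm64 ;;
        x86_64)        echo amd64 ;;
        *) echo "unsupported machine type: $1" >&2; return 1 ;;
    esac
}

VELERO_VERSION=v1.5.1   # the version used in this post

# Build the release download URL for a given machine type.
velero_url() {
    # $1 = machine type as reported by `uname -m`
    arch=$(velero_arch "$1") || return 1
    echo "https://github.com/vmware-tanzu/velero/releases/download/${VELERO_VERSION}/velero-${VELERO_VERSION}-linux-${arch}.tar.gz"
}

velero_url "$(uname -m)" || echo "no matching release asset for this machine"
```

You can pass the printed URL straight to `curl -L -O`.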

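Create a dedicated IAM user for Velero to use. You’ll also set up your parameters for the S3 bucket and region, and add permissions to your IAM user for the target S3 bucket. Remember to change the region to the AWS region of your preference. The install command further down expects the bucket name and region in $BUCKET and $REGION, and the IAM user’s access key in a ./credentials-velero file.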
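The exact commands for this step aren’t reproduced here, so treat the following as a sketch under assumptions rather than the definitive setup. The bucket name and region are placeholders, the helper function names are mine, and the policy is my understanding of the S3 portion of what the Velero AWS plugin needs when volume snapshots aren’t used.

```shell
#!/bin/sh
# Hypothetical values: pick your own bucket name and region.
BUCKET=velero-backups-example
REGION=eu-west-1

# S3-only policy scoped to the backup bucket (assumption: no EC2 snapshot
# permissions are needed because volume snapshots are disabled).
cat > velero-policy.json <<EOF
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "s3:GetObject",
        "s3:DeleteObject",
        "s3:PutObject",
        "s3:AbortMultipartUpload",
        "s3:ListMultipartUploadParts"
      ],
      "Resource": ["arn:aws:s3:::${BUCKET}/*"]
    },
    {
      "Effect": "Allow",
      "Action": ["s3:ListBucket"],
      "Resource": ["arn:aws:s3:::${BUCKET}"]
    }
  ]
}
EOF

# AWS CLI steps, wrapped in a function so nothing is created until you call it.
# Note: for us-east-1, drop the --create-bucket-configuration argument.
create_velero_user() {
    aws s3api create-bucket --bucket "$BUCKET" --region "$REGION" \
        --create-bucket-configuration LocationConstraint="$REGION"
    aws iam create-user --user-name velero
    aws iam put-user-policy --user-name velero --policy-name velero \
        --policy-document file://velero-policy.json
    aws iam create-access-key --user-name velero
}

# Write the credentials file that --secret-file points at.
write_credentials() {
    # $1 = access key id, $2 = secret access key
    cat > credentials-velero <<EOF
[default]
aws_access_key_id=$1
aws_secret_access_key=$2
EOF
}
```

Run create_velero_user once, then pass the access key ID and secret it prints to write_credentials. Export BUCKET and REGION in the same shell so the install command can pick them up.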

Now you’re ready to install Velero into your cluster. Apply the magic incantation:

velero install \
  --provider aws \
  --plugins velero/velero-plugin-for-aws-arm:main \
  --bucket $BUCKET \
  --backup-location-config region=$REGION \
  --secret-file ./credentials-velero \
  --use-restic \
  --use-volume-snapshots=false

There are a few things going on here that are different to the standard / example install commands in the documentation…

- The plugins parameter specifies the ARM version of the Velero AWS plugin. I found that the install command with the usual velero-plugin-for-aws selection tries to pull down the wrong Docker image (for x86 architecture). Here we specify we want the ARM version from the main branch.

- Use Restic Integration. This enables the Restic open source integration for persistent volume backup. This is the magic that allows us to back up our NFS mounted volumes. Also handy if you happen to be using other file storage types like EFS, AzureFile, or locally mounted storage.

- Disable volume snapshots. We’re on a Pi cluster with NFS storage, so we don’t want to use volume snapshots at all for backup.
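For S3-compatible storage such as MinIO, the part of the install command that changes is the backup location config. The sketch below builds that value and prints the resulting command instead of running it. The endpoint URL is hypothetical, the helper name is mine, and the `s3ForcePathStyle` and `s3Url` keys are the ones I understand the AWS plugin to accept for this purpose.

```shell
#!/bin/sh
# Build the --backup-location-config value for an S3-compatible endpoint.
s3_compatible_config() {
    # $1 = region label, $2 = endpoint URL
    printf 'region=%s,s3ForcePathStyle=true,s3Url=%s\n' "$1" "$2"
}

# Hypothetical MinIO endpoint; replace with your own.
CONFIG=$(s3_compatible_config minio http://minio.example.local:9000)

# Print the install command rather than running it.
echo velero install --provider aws \
    --plugins velero/velero-plugin-for-aws-arm:main \
    --bucket "${BUCKET:-velero}" \
    --backup-location-config "$CONFIG" \
    --secret-file ./credentials-velero \
    --use-restic --use-volume-snapshots=false
```

The credentials file then holds the MinIO access key and secret in place of the AWS ones.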

After the Velero install completes, you should get some output to indicate success.

Velero is installed! ⛵ Use 'kubectl logs deployment/velero -n velero' to view the status.

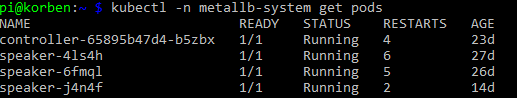

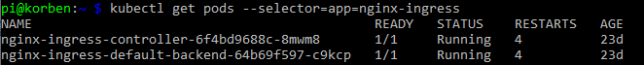

You should also see your pods in the velero namespace up and running for Velero and Restic.

kubectl -n velero get pods
NAME                     READY   STATUS    RESTARTS   AGE
restic-589rm             1/1     Running   0          7m29s
restic-g6swc             1/1     Running   0          7m29s
velero-555695d95-dbzmd   1/1     Running   0          7m29s

If you see pod initialization errors, double check that you didn’t specify the normal Velero plugin for AWS, which would cause the incorrect Docker image architecture to be used.
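One way to check for that mismatch is to compare the node architectures with the plugin image that the velero deployment actually references. This is a sketch with helper names of my own, and it assumes the plugin is attached to the deployment as an init container.

```shell
#!/bin/sh
# True if the image string contains the ARM build of the AWS plugin used in this post.
is_arm_plugin_image() {
    case "$1" in
        */velero-plugin-for-aws-arm:*) return 0 ;;
        *) return 1 ;;
    esac
}

# Show node architectures and the plugin image(s) on the velero deployment.
inspect_velero_arch() {
    kubectl get nodes \
        -o custom-columns=NAME:.metadata.name,ARCH:.status.nodeInfo.architecture
    image=$(kubectl -n velero get deployment velero \
        -o jsonpath='{.spec.template.spec.initContainers[*].image}')
    echo "plugin image: $image"
    is_arm_plugin_image "$image" || echo "warning: this is not the ARM plugin image"
}
```

Call inspect_velero_arch from the machine where kubectl is configured for the cluster.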

With that complete, you’re ready to run your first backup.

Initiating a Manual Backup

Request a backup with the velero backup command. In the example below I’ll target my demo namespace. I’ll get volume backups for everything in this namespace that uses supported persistent volumes with the --default-volumes-to-restic flag.

If you don’t specify that flag, volumes are opted out of restic backup by default. That is, you opt in to restic volume backups with this flag.

velero backup create demo-backup --include-namespaces=demo --default-volumes-to-restic
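If you’d rather opt in individual volumes than everything in the namespace, Velero’s restic integration also reads a `backup.velero.io/backup-volumes` annotation on the pod. The sketch below only prints the kubectl command so you can review it first. The pod and volume names are hypothetical, and the helper name is mine.

```shell
#!/bin/sh
# Print the kubectl command that opts specific volumes of one pod in to restic backup.
opt_in_command() {
    # $1 = namespace, $2 = pod name, $3 = comma-separated volume names
    echo "kubectl -n $1 annotate pod/$2 backup.velero.io/backup-volumes=$3"
}

# Hypothetical pod and volume names.
opt_in_command demo wordpress-0 wp-content
```

The volume names are the ones listed under `volumes` in the pod spec, not the PVC names.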

You can request backup state and details using the velero backup describe command.

velero backup describe demo-backup --details

It’s worth running a backup of a test namespace with some test workloads. Then simulate failure by deleting everything, followed by a velero restore.

This should show that the backup process works end to end and that the DR strategy is sound.

Don’t forget to do occasional test DR scenarios to exercise your deployed backup solution!
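A test run like that could be scripted roughly as follows. This is a sketch, not a hardened procedure. The `run` and `restore_drill` helpers are my own names, and the script defaults to a dry run that only prints each command, because the real thing deletes a namespace.

```shell
#!/bin/sh
# Run a command, or just print it when DRY_RUN=1 (the default here).
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# Back up a namespace, delete it, restore it, then list what came back.
restore_drill() {
    # $1 = namespace, $2 = backup name
    run velero backup create "$2" --include-namespaces="$1" --default-volumes-to-restic --wait
    run kubectl delete namespace "$1"
    run velero restore create --from-backup "$2" --wait
    run kubectl -n "$1" get pods,pvc
}

restore_drill demo demo-backup
```

Set DRY_RUN=0 only against a test namespace you’re happy to lose, and check the restored volume contents by hand afterwards.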

Conclusion

It’s a good idea to back up your Kubernetes cluster once you start running services with persistence. Even if it is just for personal use.

A quick and dirty approach is to script out the export and backup of Kubernetes resources and mounted container storage. In the long run however, you’ll save time and get a more fully featured solution by using tools that are specifically designed for this use case.